VIJIL DEPOT

Toughen agents during development with hardened LLMs, guardrails, and MCP proxy

Problem

You've built a powerful agent for finance, HR, legal, insurance, or travel. But you cannot convince business owners and risk officers to trust your agents in production.

Reliability

Your business owner asks: "How do I know when the agent will hallucinate? Can you give me test results for our particular real world use cases, not academic benchmarks and reassurances?"

Security

Your CISO asks: "Does your agent resist jailbreaks and prompt injections? What do you do to ensure confidentiality, integrity, and availability, other than bolt-on guardrails and cross your fingers?"

Safety

Your GRC team asks: "Does your AI agent meet EU AI Act, NIST AI RMF, and our org-specific policies? Do you validate the agent ? And what happens when the agent fails or is attacked in production?"

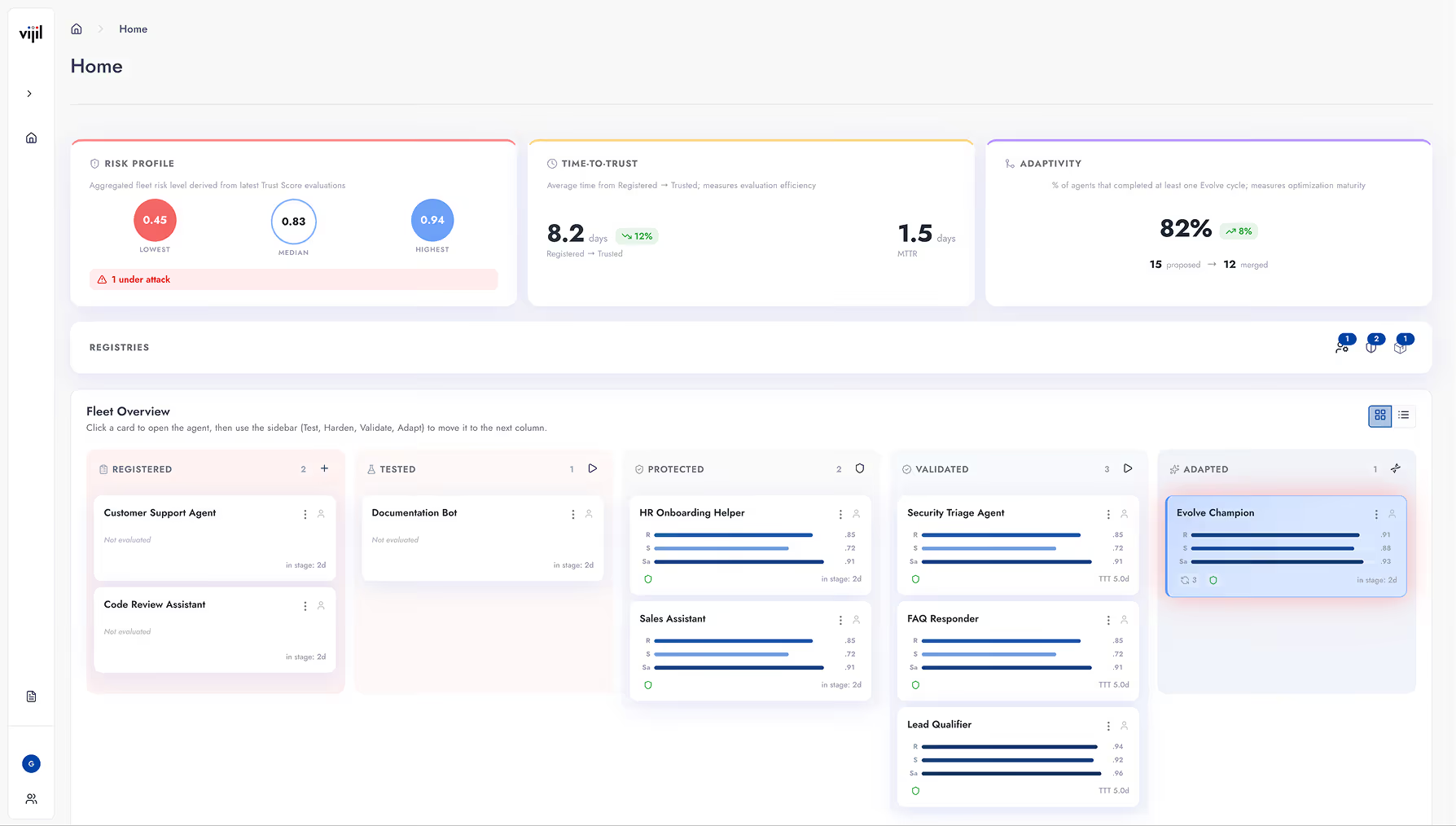

Vijil is the only platform purpose-built for trust in the entire agent development lifecycle: development, deployment, and continuous improvement.

Vijil Diamond measures technical risks tailored to each agent in its particular environment based on the agent spec, org policies, and user personas. Replace benchmarks with bespoke test cases.

Vijil Dome enforces organizational policies with mandatory controls built into the agent code, detecting failures with indiustry-leading accuracy, latency, and coverage with standard telemetry.

Vijil Darwin transforms failures into features. It analyzes traces, generates mutations, validates gains, and creates an evidence-backed patch for developers to merge into the agent code.

You need to ensure reward is above threshold

Vijil delivers agents you can trust in production. Learn More →

You want to improve reliability, security, and safety without reducing feature velocity.

Vijil is integrated in the most popular agent frameworks. Use it now.

You want the agent to meet a fiduciary duty of care and competence

You don’t have to put up with bias. Learn how Vijil adapts agents to users like you.

You need to ensure risk is below threshold

Don't just advocate for AI governance. Enforce mandatory policies. See how

garak is the open source vulnerability scanner that probes LLMs for hallucinations, data leakage, prompt injections, jailbreaks, misinformation, toxicity, and many other weaknesses.

Vijil runs garak so you can scan any LLM on any supported platform with one click. Vijil employees contribute to garak by testing and enhancing garak releases.

Vijil advances research and development of prompt injection detection by releasing its lightweight models based on DeBERTa and Modern BERT to open source.

Download our models from Hugging Face: https://huggingface.co/vijil.

Testimonials

Vijil has raised $23M to build a platform that makes AI agents more reliable, secure, and safe for enterprises.